News

28 April 2025

Data Protection in the era of AI-powered marketing (VI). Sixth edition. Assessing GDPR compliance in AI-CRM and chatbots for marketing

By: Paola Cardozo Solano - PhD Researcher & Lecturer. Vrije Universiteit (VU) Amsterdam, The Netherlands

In this edition, we examine how well AI-driven customer relationship management (CRM) systems and chatbot software uphold the principles of the General Data Protection Regulation (GDPR), viewed through the lens of data protection by design. These tools are used extensively in marketing and process vast amounts of personal data.

To assess compliance, we begin by introducing the selected companies at the forefront of these technologies. Next, we outline the documentation used as the basis for evaluation. Finally, we present a structured set of yes/no questions corresponding to each GDPR principle, designed to reveal how data protection by design is embedded in practice.

The central aim is to uncover the specific design features these software providers offer their clients to support GDPR compliance. By shedding light on these mechanisms, we hope to contribute to a deeper understanding of how data protection is being operationalized in contemporary AI-powered services.

The survey

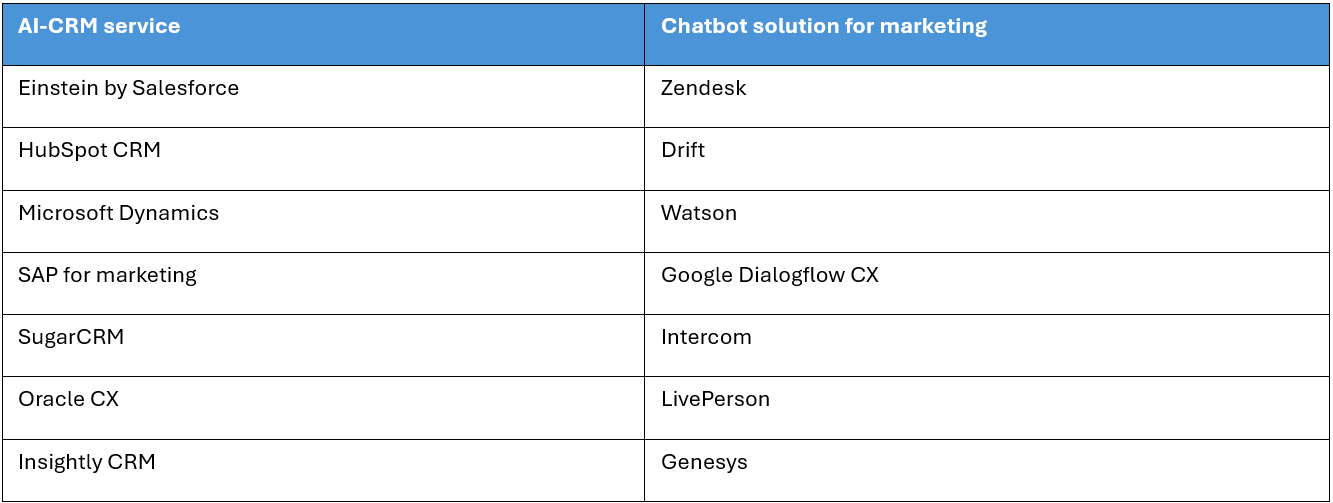

Following Michels et al. (2020), the CRM and chatbot providers featured in the upcoming charts were selected through a review of industry reports and market analyses, ensuring our focus remains on the leading solutions in the field.

Figure 1. Overview of AI-CRM and chatbots included in the 2023 survey. Information sourced from AI Multiple (2023), Insightly (s. f.), and TechTarget (2022a) (2022b), Gartner(2023), and Forbes(2023).

The information used to evaluate compliance consists of the contract, agreement, or binding legal act, published on the website of each CRM and chatbot software provider, that governs the relationship between the software provider (as the processor) and the client firm (as the data controller). These documents are generally referred to as data processing agreements or addendums. They contain standard terms that the clients of these software companies cannot negotiate. Additionally, the evaluation includes information publicly available on each provider’s website, particularly concerning data protection practices, security measures, and the resources offered to clients. Unfortunately, leading solutions from companies such as Kore.ai, Cognigy, and Boost.ai were not assessed because their data processing agreements are not publicly available.

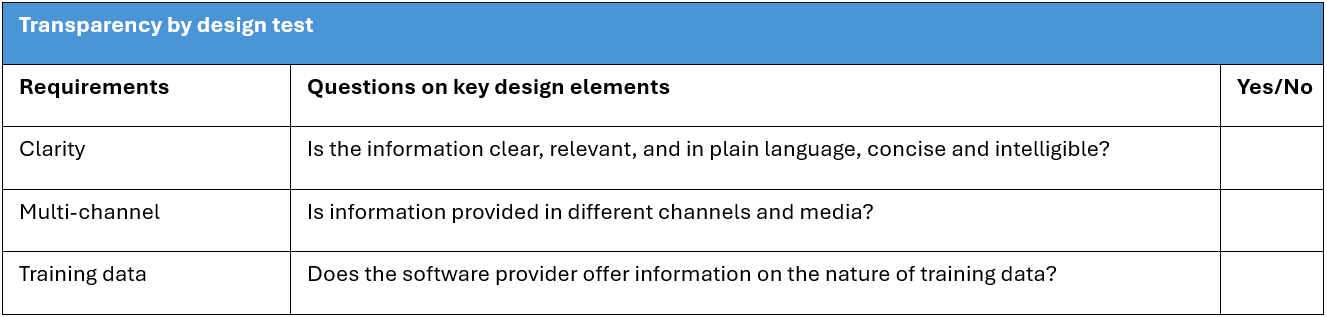

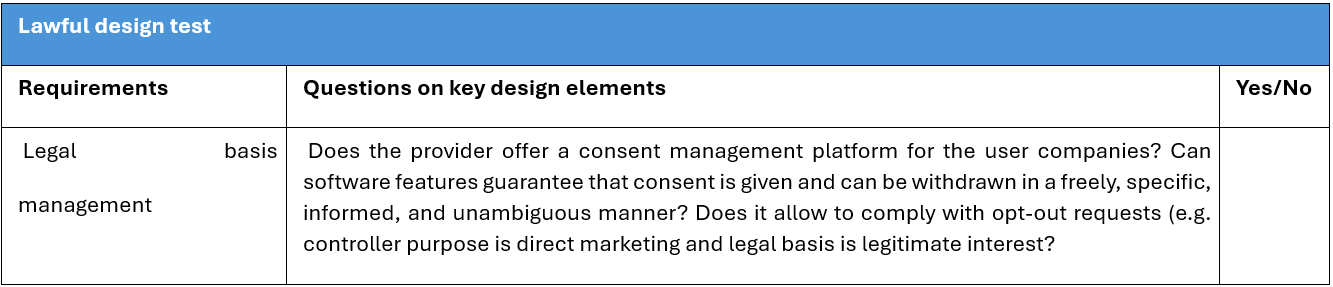

Based on the Guidelines on Article 25: Data Protection by Design and by Default by the EDPB (2020), we developed a series of yes-or-no questions to evaluate compliance with GDPR principles by identifying key design elements. Additional questions were included to assess the disclosure of training data, transparency regarding tracking and profiling, and the support provided to clients in conducting a Data Protection Impact Assessment (DPIA).

The questions were designed based on the principles outlined in Article 5 of the GDPR, except for accuracy, purpose limitation, and accountability. The principle of accountability was excluded from the survey (as well as from the EDPB guidelines) because it is considered an overarching concept, as it requires data controllers to choose and demonstrate appropriate measures for protecting personal data. The principle of accuracy was addressed under user autonomy within the fairness principle, focusing only on how easily users can exercise their right to rectify data. Lastly, the principle of purpose limitation was not included, as the purpose of data processing is typically defined by the service agreements established between the parties.

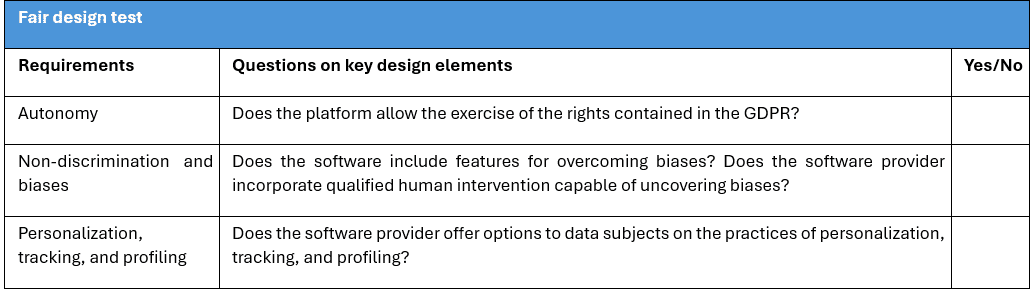

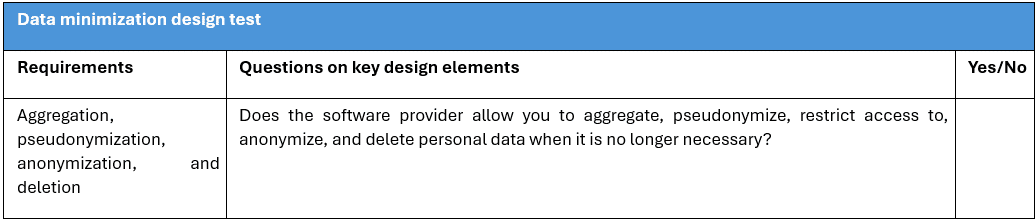

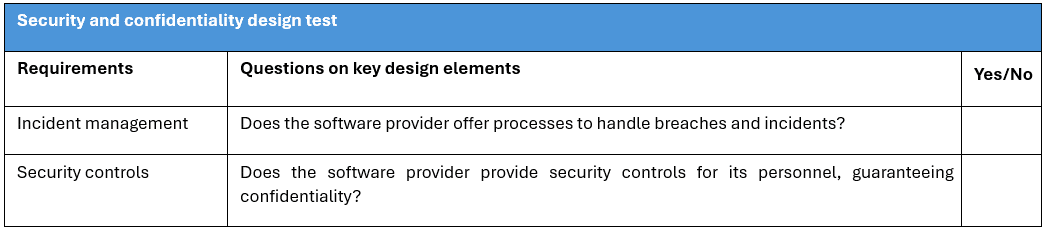

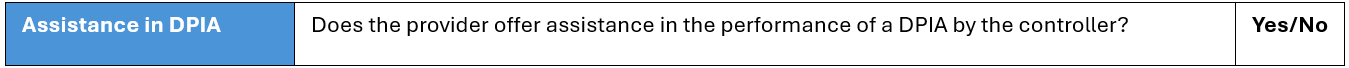

The following questions assess how key design elements are implemented to incorporate GDPR principles into our case study:

Figure 2. Transparent design test – Adaptation of EDPB (2020).

Figure 3. Lawful design test -Adaptation of the EDPB (2020)

Figure 4. Fair design design test – Adaptation of the EDPB (2020).

Figure 5. Data minimization design test – Adaptation of EDPB (2020).

Figure 6. Integrity and confidentiality design test – Adaptation of the EDPB (2020).

Figure 7. Assistance in DPIA.
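To make the yes/no approach more concrete, here is a minimal sketch of how answers to such tests could be recorded and tallied per principle. It is only an illustration: the principle labels mirror Figures 2 to 7, but the class and function names (`ProviderAssessment`, `summarize`), the provider name, and the answers are invented placeholders, not the survey's actual questions or results.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Labels mirror the tests in Figures 2-7. Everything else in this sketch
# (names, answers) is a hypothetical placeholder, not survey data.
PRINCIPLES = (
    "transparency",
    "lawfulness",
    "fairness",
    "data_minimization",
    "integrity_confidentiality",
    "dpia_assistance",
)


@dataclass
class ProviderAssessment:
    """Yes/no answers for one provider, grouped by principle."""

    provider: str
    answers: dict[str, list[bool]] = field(default_factory=dict)

    def record(self, principle: str, answer: bool) -> None:
        """Store one yes (True) or no (False) answer under a principle."""
        if principle not in PRINCIPLES:
            raise ValueError(f"unknown principle: {principle}")
        self.answers.setdefault(principle, []).append(answer)

    def share_yes(self, principle: str) -> float | None:
        """Fraction of 'yes' answers, or None if nothing was recorded."""
        given = self.answers.get(principle, [])
        if not given:
            return None
        return sum(given) / len(given)


def summarize(assessment: ProviderAssessment) -> dict[str, float | None]:
    """Share of 'yes' answers for every principle, in a fixed order."""
    return {p: assessment.share_yes(p) for p in PRINCIPLES}


# Hypothetical provider with made-up answers.
example = ProviderAssessment("Provider A")
example.record("transparency", True)
example.record("transparency", False)
example.record("dpia_assistance", False)
summary = summarize(example)
```

Keeping unanswered principles as `None` rather than zero avoids presenting "not assessed" as "non-compliant", which matters when some documentation is not publicly available.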

Results of the survey

The following figures summarize the results of the survey:

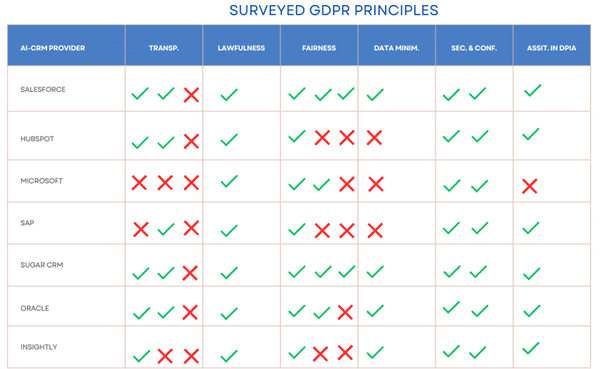

Figure 8. Survey results for AI-CRM providers.

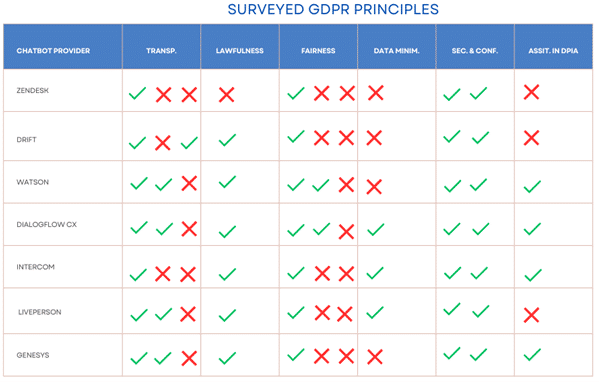

Figure 9. Survey results for chatbot providers.

The analysis of CRM and chatbot providers shows uneven adherence to GDPR principles, with clear strengths in transparency and lawfulness but notable gaps. While most providers present data protection information accessibly, the complex legal language in data protection documents often hinders understanding for non-legal professionals. Companies like Drift and Zendesk excel in clarity, while Salesforce, SugarCRM, and Genesys enhance usability through supportive resources. Consent management tools are commonly offered, with Salesforce and HubSpot standing out for supporting multiple legal bases for personal data processing, especially for direct marketing. However, a major weakness is the widespread lack of transparency around AI training data. Only Drift discloses this, and it does so for advertising purposes rather than for legal compliance: “Drift’s conversational AI is trained on over 6 billion conversations to identify the patterns that engage and convert visitors” (Drift, n.d.). This echoes broader concerns around AI training practices, such as those faced by OpenAI, and raises important questions about data protection and the legitimacy of data sources.

Fairness is addressed through mechanisms for handling data subject requests, with Salesforce notably aligning its features with GDPR rights to enhance user interaction and client compliance. However, tools to detect AI bias are rare: only Salesforce and SugarCRM offer concrete solutions, while others like Microsoft and IBM remain vague.

For data minimization, programmatic data deletion is best practice, but approaches vary. While some tools like encryption and data masking exist, there is no consistent support for limiting clients’ data collection. Security measures are more uniform. All providers report breach protocols and confidentiality protections, though Oracle and LivePerson raise concerns with broad employee data processing.
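As a rough illustration of what programmatic deletion and data masking can mean in practice, the sketch below drops records older than a retention window and masks email addresses before display. It is a simplified example under stated assumptions: the record layout (`email`, `collected_at`), the function names, and the 365-day window are hypothetical, and it does not reflect any surveyed vendor's actual API or a recommended retention period.

```python
from datetime import datetime, timedelta, timezone


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, sep, domain = email.partition("@")
    if not sep or not local:
        # Not a usable address: reveal nothing.
        return "***"
    return f"{local[0]}***@{domain}"


def purge_expired(records: list[dict], retention: timedelta, now: datetime) -> list[dict]:
    """Return only the records collected inside the retention window."""
    cutoff = now - retention
    return [r for r in records if r["collected_at"] >= cutoff]


# Hypothetical contact records; the field names are assumptions.
now = datetime(2025, 1, 1, tzinfo=timezone.utc)
contacts = [
    {"email": "ana@example.com", "collected_at": now - timedelta(days=30)},
    {"email": "bob@example.com", "collected_at": now - timedelta(days=800)},
]

# Assumed retention window of 365 days, chosen only for the example.
kept = purge_expired(contacts, retention=timedelta(days=365), now=now)
masked = [mask_email(c["email"]) for c in kept]
```

In a real system the deletion would run against the provider's storage on a schedule; the point here is only that the retention rule is expressed in code rather than left to manual clean-up.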

Support for Data Protection Impact Assessments (DPIAs) is limited: only four of fourteen companies explicitly commit to helping clients. CRM providers offer more transparency and tools than chatbot providers, many of which lack accessible documentation.

Salesforce and SugarCRM stand out for compliance, offering tools and content that align with or exceed GDPR requirements. In contrast, the overall application of GDPR principles in AI-based CRM and chatbot systems remains inconsistent, especially regarding data minimization and DPIAs. This inconsistency may stem from limited guidance under Article 25 of the GDPR. Still, it is clear that embedding data protection into software design has tangible benefits: it empowers users to exercise their rights and simplifies compliance for client organizations, both of which are decisive for fostering trust and satisfaction in digital services.

Limitations

One limitation of this study is the potential for virtue signaling, as it relies on companies’ declared data protection practices, which may not reflect actual implementation. To address this, future research could explore clients’ perceptions of how effectively these tools function in practice.

Discussion, conclusions, and recommendations

This series has highlighted that software providers play a crucial role in ensuring compliance with GDPR principles and in facilitating the effective exercise of data subjects’ rights. The spirit of data protection by design suggests that data protection should be a central consideration when developing solutions that process personal data. A critical point must therefore be made: the scope of Article 25 of the GDPR is narrow, as it obliges only data controllers to implement data protection by design. It thereby misses a critical opportunity to require software developers, as data processors, to embed data protection directly into the core of their products. As a result, the GDPR overlooks the potential chain reaction that a data protection-compliant solution can create in advancing the legislation’s broader objectives.

In other words, ensuring data protection rights—along with fundamental freedoms such as autonomy and freedom of thought—begins much earlier in the process than the GDPR currently envisions. Consequently, our findings highlight the efforts of companies that prioritize data protection during the design phase, positioning themselves as strategic enablers of compliance for clients who adopt their solutions.

The limited guidance provided by the GDPR on implementing the data protection by design model leaves a wide margin of discretion for structuring data protection-aware products. This has led to significant creativity and interdisciplinary collaboration among providers, who offer practical tools that enhance their customers’ data protection capabilities. However, these tools can only achieve their full potential if client organizations are knowledgeable about them and committed to applying them effectively.

Once again, the lack of direction in the GDPR underscores the importance of these results. The vagueness of Article 25 highlights the need to explore how developers of marketing solutions contribute to protecting data subjects. This is especially relevant in marketing, a rapidly evolving discipline eager to incorporate data-based technologies such as artificial intelligence. At the same time, this research acknowledges the challenges marketers face in balancing data protection with customer engagement.

Among the key findings, we draw attention to the fact that not all companies make their Data Processing Agreements (DPAs) publicly available, nor do they offer substantial information on the data protection features embedded in their software. This information should be a critical factor when businesses select chatbot or CRM solutions. Considering that the solutions analyzed are intended for direct marketing, providers should include tools for managing various legal bases for processing, such as legitimate interests. Additionally, providers should offer explicit support for clients conducting DPIAs, which only four of the fourteen surveyed companies currently commit to.
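To illustrate the kind of tooling for managing several legal bases mentioned above, the following sketch records a legal basis per processing purpose and applies a deliberately simplified check before processing. The enum lists the six bases of Article 6(1) GDPR; everything else (`ProcessingPurpose`, `may_process`, the purposes, and the gating logic) is a hypothetical simplification for illustration, not legal advice and not any provider's implementation.

```python
from dataclasses import dataclass
from enum import Enum


class LegalBasis(Enum):
    """The six legal bases listed in Article 6(1) GDPR."""

    CONSENT = "consent"
    CONTRACT = "contract"
    LEGAL_OBLIGATION = "legal_obligation"
    VITAL_INTERESTS = "vital_interests"
    PUBLIC_TASK = "public_task"
    LEGITIMATE_INTERESTS = "legitimate_interests"


@dataclass(frozen=True)
class ProcessingPurpose:
    """A marketing purpose together with the legal basis the client relies on."""

    name: str
    basis: LegalBasis


def may_process(purpose: ProcessingPurpose, has_consent: bool, has_objected: bool) -> bool:
    """Simplified gate for direct marketing purposes.

    Assumption: consent-based purposes need recorded consent, and purposes
    based on legitimate interests stop once the person has objected. Other
    bases are passed through unchecked, which a real tool would not do.
    """
    if purpose.basis is LegalBasis.CONSENT:
        return has_consent
    if purpose.basis is LegalBasis.LEGITIMATE_INTERESTS:
        return not has_objected
    return True


# Hypothetical purposes for the example.
newsletter = ProcessingPurpose("newsletter", LegalBasis.CONSENT)
postal_offers = ProcessingPurpose("postal_offers", LegalBasis.LEGITIMATE_INTERESTS)

newsletter_allowed = may_process(newsletter, has_consent=False, has_objected=False)
postal_allowed = may_process(postal_offers, has_consent=False, has_objected=False)
postal_after_objection = may_process(postal_offers, has_consent=False, has_objected=True)
```

Storing the basis next to each purpose is what lets a client show which basis it relied on for a given campaign, instead of treating consent as the only option.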

In terms of fairness, two companies stand out for offering tools that help clients address biases in their datasets. This is an innovative example of creating value-sensitive tools that can build trust in AI. A survey by Geraghty & Intercom, Inc. (2021) found that around 80-90% of organizations have encountered issues with biases in their AI models, underscoring the potential impact on fundamental rights like non-discrimination.

Transparency concerning training data is a compelling issue briefly explored in this research. This point will be extensively discussed in the coming years, especially as the obligations of the AI Act take effect. Furthermore, the discussion goes beyond the realm of data protection, touching on fields such as copyright.

All the companies surveyed have implemented procedures to handle security breaches and incidents, as well as technical and organizational measures to ensure the confidentiality of processed data. Regarding data minimization, providers have developed diverse technical tools to comply with this principle, and these tools deserve further technical analysis to evaluate their effectiveness.

For providers, we recommend drafting their DPAs in simpler, more accessible language, minimizing the use of legal jargon to ensure that all departments within client organizations can easily understand them. Using clear, straightforward terms would particularly benefit small and medium-sized enterprises (SMEs) that may lack dedicated legal or data protection teams. Contextual transparency is, therefore, a key consideration for ensuring both compliance and respect for data protection in processing activities.

Another recommendation for providers is to align their features with the principles of the GDPR. This approach will help companies identify the functionalities they need to implement in order to demonstrate compliance.

A significant takeaway is the need for companies to be discerning when selecting chatbot or CRM providers based on their data protection features. In simple terms, choosing software that prioritizes data protection will make it easier for companies to meet the requirements of data protection authorities and respond effectively to consumer and buyer requests. We praise those providers who have created resources like blogs and tutorials to guide their clients in adopting data protection-respecting practices.

Further research in the direction outlined in this series can provide insights for data protection authorities, legal scholars, and civil society, helping them understand how data protection by design is being implemented in practice and which tools the industry is developing to facilitate compliance with data protection principles. The findings could lead to the identification of best practices and effective features, which might eventually form industry standards, taking into account implementation costs and the current state of the art. We also suggest extending this research methodology to other data-intensive industries increasingly reliant on AI, such as fintech, healthcare, retail, and government services.

Finally, data protection-enabling solutions can play a key role in alleviating marketers’ concerns about a conflict between data protection compliance and the success of their campaigns. As Ann Cavoukian (2010) argued, data protection by design aims to eliminate these false dichotomies by integrating data protection into the development and use of personal data processing solutions.

Conclusions of this blog series

AI-driven marketing presents significant data protection risks to consumers and buyers, including profiling, surveillance, and the manipulation of emotions and thoughts through the arbitrary exploitation of personal data. As such, software providers that develop marketing solutions play a critical role in enabling compliance with GDPR principles and ensuring the effective exercise of data subjects’ rights. The principle of data protection by design underscores that data protection must be a primary consideration during the development of any solution used to process personal data.

Given this context, the findings indicate that the surveyed leading CRM and chatbot providers have not widely or adequately implemented data protection by design in their solutions. From the perspective of GDPR principles, a fragmented and inconsistent application is evident, likely due to the lack of clear guidance in Article 25 of the legislation. The analysis identified specific functionalities and features offered by vendors to help their customers conduct and demonstrate data processing practices that respect data protection.

On a positive note, the survey revealed that all providers implement technical and organizational measures to safeguard the security and confidentiality of the data they process. Most solutions support the exercise of data subjects’ rights, and leading providers offer tools to address bias in datasets, which contributes to fairness and trust in AI. However, there are several areas of concern. Transparency regarding Data Processing Agreements (DPAs) and other data protection-related information is often lacking: some information is not publicly accessible or is presented in overly complex, legalistic language. Additionally, apart from Drift, which mentions its training data for advertising purposes, none of the providers disclosed details about the data used to train their systems. Only a few providers allow the management of legal bases for processing beyond consent, such as legitimate interests, which could offer practical benefits for marketers.

It is important to highlight that Article 25 of the GDPR has a limited scope, as it only obliges data controllers to implement data protection by design. This represents a missed opportunity to also require software developers and other data processors to integrate data protection at the core of their products.

Therefore, software designers should prioritize data protection when developing AI-powered marketing solutions. Their products not only support the exercise of data subjects’ rights and facilitate compliance for clients, but also enhance client satisfaction and brand reputation.

Furthermore, as SugarCRM (2018), one of the key companies analyzed in this study, states: “With so much personal data being collected, stored and accessed inside the system – you really need to trust your CRM vendor”. Software developers are therefore key actors in safeguarding fundamental rights in the digital age, including the rights to privacy and data protection, because their solutions, and the practical features they incorporate, will be used by thousands of data controllers around the world.

Bibliography

AI Multiple. (2023). AI-powered CRM Systems in 2023: In-Depth Guide. https://research.aimultiple.com/crm-ai/

Drift. (n.d.). Drift Everything starts with a conversation. Retrieved June 22, 2023, from https://www.drift.com/

European Data Protection Board (EDPB). (2020). Guidelines 4/2019 on Article 25 Data Protection by Design and by Default. https://edpb.europa.eu/sites/default/files/files/file1/edpb_guidelines_201904_dataprotection_by_design_and_by_default_v2.0_en.pdf

Forbes Advisor. (2023). 7 Best Chatbots. Forbes. https://www.forbes.com/advisor/business/software/best-chatbots/

Gartner Inc. (2023). Reviews for Enterprise Conversational AI Platforms Reviews 2023. Gartner. https://www.gartner.com/market/enterprise-conversational-ai-platforms

Geraghty, L., & Intercom, Inc. (2021, July 15). IBM’s Arin Bhowmick on designing ethical AI. The Intercom Blog. https://www.intercom.com/blog/podcasts/arin-bhowmick-ibm-on-ethical-ai/

Insightly. (n.d.). Best-In-Class Marketing Automation Software | Insightly. Retrieved July 5, 2023, from https://www.insightly.com/marketing/

Michels, J. D., Millard, C., & Turton, F. (2020). Contracts for Clouds, Revisited: An Analysis of the Standard Contracts for 40 Cloud Computing Services (SSRN Scholarly Paper No. 3624712). https://papers.ssrn.com/abstract=3624712

SugarCRM. (2018, March 22). Coming Soon: Innovation from SugarCRM to address Data Protection and GDPR. https://www.sugarcrm.com/blog/innovation-from-sugarcrm-to-address-data-protection-and-gdprcompliance/

TechTarget. (2022a). AI-powered CRM platforms compared. Customer Experience. https://www.techtarget.com/searchcustomerexperience/feature/AI-powered-CRM-platformscompared